Introduction: Navigating AI Hallucinations for Your Brand

What AI Hallucinations Mean for Your Brand

AI hallucinations occur when generative AI systems produce distorted or incorrect information about a brand—wrong facts, misattributed people, fabricated products, or false affiliations—presented as factual without grounding in reality. Users typically accept these responses at face value.

The scale is significant. Reported hallucination rates for leading LLMs such as GPT-4, Gemini, and Claude vary widely with the benchmark and task, with cited figures ranging from 15-52%. With millions of users querying AI systems daily, inaccurate responses about your brand can reach large audiences before you’re aware they exist.

Why This Matters Now

These inaccuracies don’t stay contained. They spread across the web and often become a prospect’s first exposure to your brand, eroding trust before a relationship begins. When potential clients, partners, or employees encounter false information through AI tools, you’re managing a reputational issue you didn’t create and may not know exists.

The business impact is measurable. Brands report reputational damage from inaccurate AI responses, and companies are now being held accountable for what their AI systems say. In 2024, a Canadian tribunal held Air Canada liable after its chatbot gave a customer incorrect information about bereavement fares. In 2025, Deloitte agreed to partially refund the Australian government for a report, produced under a contract worth roughly US$290,000, after AI-fabricated citations were found in it.

The Operational Challenge

Traditional brand monitoring doesn’t capture AI hallucinations. You need to actively query LLMs about your company, track what they generate, and implement correction strategies when errors appear. This requires new processes: systematic auditing of AI responses, documentation of inaccuracies, and engagement with platforms that allow corrections. AI brand monitoring tools have emerged to address this gap, but the underlying work—defining accurate information, prioritizing corrections, and maintaining consistency—remains your responsibility.

What AI Hallucinations Are and How They Happen

Defining AI Hallucinations

AI hallucination is a phenomenon where large language models (LLMs) or generative AI systems perceive nonexistent patterns, creating outputs with no basis in reality. These are incorrect or misleading results that AI models generate while appearing confident and authoritative.

The term describes outputs not grounded in training data or incorrectly decoded by the model’s architecture. For brands, this manifests as AI systems stating incorrect founding dates, misattributing products, conflating your company with competitors, or inventing entirely fictional details—all delivered with the same confidence as accurate information.

Why AI Models Generate False Information

AI hallucinations stem from technical limitations in how models are trained and operate:

- Insufficient or incomplete training data leaves gaps in the model’s understanding. If training data is biased, inaccurate, or flawed, the model learns incorrect patterns.

- Lack of proper grounding means models can generate factually incorrect outputs, even fabricating sources or links.

- Overfitting occurs when a model becomes too specialized in training data and fails to generalize to new situations.

- High model complexity can lead a model to latch onto spurious correlations that don’t reflect real-world relationships.

Two conditions make hallucinations predictable: data voids (key brand facts don’t exist online in structured form) and data noise (conflicting information forces the model to guess). If your product specs, pricing, or case studies aren’t clearly represented in structured formats, the model will invent what it needs to complete the response.
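One way to make the two conditions concrete is to compare what each public source says about a given fact. The sketch below is illustrative only: the source names, fact keys, sample values, and the `classify_fact` helper are all hypothetical, and a real audit would likely need fuzzier matching than exact string comparison.

```python
from typing import Optional


def classify_fact(values_by_source: dict[str, Optional[str]]) -> str:
    """Label a brand fact as 'void', 'noise', or 'consistent'.

    values_by_source maps a source name to the value it publishes,
    or None when that source says nothing about the fact.
    """
    # Normalize so differences in case or whitespace are not counted as conflicts.
    published = {
        value.strip().lower()
        for value in values_by_source.values()
        if value and value.strip()
    }
    if not published:
        return "void"    # nothing published anywhere: the model has to invent it
    if len(published) > 1:
        return "noise"   # sources disagree: the model has to guess
    return "consistent"


# Hypothetical audit data for an imaginary company.
facts = {
    "founding_year": {"website": "2012", "linkedin": "2012", "crunchbase": "2014"},
    "starting_price": {"website": None, "linkedin": None, "crunchbase": None},
    "headquarters": {"website": "Austin, TX", "linkedin": "austin, tx", "crunchbase": None},
}

report = {name: classify_fact(sources) for name, sources in facts.items()}
for name, label in report.items():
    print(f"{name}: {label}")
```

Facts labeled "void" point to content that needs to be published; facts labeled "noise" point to profiles that need to be reconciled.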

The Business Risk: When AI Gets Your Brand Wrong

AI Misinformation as a Revenue Threat

AI hallucinations are predictable, scalable events that affect how prospects research and evaluate vendors. When an LLM fabricates details about your product, pricing, or capabilities, that misinformation travels instantly across millions of queries without correction. Unlike a bad review or press mistake, there is no single source to dispute—the error replicates across every conversation where the model guesses instead of knowing.

The financial exposure is real. Air Canada was held liable after its chatbot gave a customer incorrect bereavement fare information, and Deloitte agreed to a partial refund on a government report, produced under a contract worth roughly US$290,000, after AI-fabricated citations were found in it. These are actual cases, not hypothetical risks.

Why Hallucinations Stick

Hallucinations occur when models generate text based on probability, not understanding. The output is confident, structured, and persuasive—which makes it dangerous. Users trust the answer because it reads like expertise, even when the underlying facts are wrong.

AI is shaping buyer perception at the research stage, before prospects visit your site. If a hallucination describes your platform with wrong features, incorrect pricing, or a comparison to the wrong competitor, that becomes the frame through which they evaluate you. In B2B contexts, where buyers form shortlists through generative AI research, a hallucination at this stage can mean a lost deal.

How to Audit Your Brand’s Representation in AI Engines

An AI visibility audit systematically evaluates how your brand’s data, claims, and identity are retrieved, interpreted, and cited by generative AI systems. This differs from traditional SEO audits because it focuses on how LLMs categorize your brand, the accuracy of information they surface, and which sources they prioritize.

Check Your Brand’s AI Footprint

Start with manual spot-checks across leading AI platforms—ChatGPT, Perplexity, Gemini, Claude, Copilot, and Google AI Overviews. Run brand-focused queries that mirror your audience’s buyer journey: pain points, product discovery, and competitive comparisons. Document whether your brand appears, how it’s positioned, and whether the information is accurate and consistent with your messaging.
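A spot-check is easier to repeat if each answer is logged in a consistent shape. The following is a minimal sketch, assuming answers are pasted in by hand after manual queries; the `SpotCheck` record, the `review` helper, and the sample brand are hypothetical, and the substring matching is deliberately naive.

```python
from dataclasses import dataclass, field


@dataclass
class SpotCheck:
    platform: str    # e.g. "ChatGPT", entered by hand
    prompt: str      # the query that was run
    response: str    # pasted text of the AI answer
    brand_mentioned: bool = False
    facts_confirmed: list[str] = field(default_factory=list)
    facts_missing: list[str] = field(default_factory=list)


def review(check: SpotCheck, brand: str, expected_facts: dict[str, str]) -> SpotCheck:
    """Fill in the review fields using case-insensitive substring matching.

    A "missing" fact is not necessarily wrong; it only means the expected
    value was not found verbatim and a human should read the answer.
    """
    text = check.response.lower()
    check.brand_mentioned = brand.lower() in text
    check.facts_confirmed = []
    check.facts_missing = []
    for label, value in expected_facts.items():
        if value.lower() in text:
            check.facts_confirmed.append(label)
        else:
            check.facts_missing.append(label)
    return check


# Hypothetical example: the answer states the wrong founding year.
check = review(
    SpotCheck(
        platform="ChatGPT",
        prompt="What does Acme Analytics do?",
        response="Acme Analytics, founded in 2014, sells dashboards.",
    ),
    brand="Acme Analytics",
    expected_facts={"founding_year": "2012", "product": "dashboards"},
)
print(check.brand_mentioned, check.facts_confirmed, check.facts_missing)
```

Keeping the prompt alongside the response matters: the same question can then be rerun after a model update and compared against the earlier record.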

Map Entity Recognition and Sentiment

Verify your brand’s presence in Google Knowledge Graph and examine how LLMs define your core subject matter. Run prompts across multiple AI engines to analyze sentiment and identify hallucinations. Look for entity fragmentation, where inconsistent language across platforms (LinkedIn, your website, press releases) confuses AI models about who you are and what you do.

Identify Source Attribution

Determine which URLs AI systems cite when mentioning your brand. Tools like Perplexity and Google AI Overviews reveal their sources directly. Track citation frequency and accuracy—how often do AI engines link back to your domain versus third-party sources? Assess the credibility of platforms where your brand appears, as AI systems assign weight to these signals.
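Citation tracking can start as a simple tally of which domains appear in the sources an AI answer lists. This sketch assumes the cited URLs have already been collected by hand; the `citation_share` helper and the example URLs are hypothetical.

```python
from collections import Counter
from urllib.parse import urlparse


def citation_share(cited_urls: list[str], owned_domain: str) -> dict:
    """Count how many cited URLs belong to the brand's own domain."""
    hosts = []
    for url in cited_urls:
        host = (urlparse(url).hostname or "").lower()
        if host.startswith("www."):
            host = host[4:]  # treat www.example.com and example.com alike
        hosts.append(host)

    counts = Counter(hosts)
    # A host is "owned" if it is the domain itself or one of its subdomains.
    owned = sum(
        n for host, n in counts.items()
        if host == owned_domain or host.endswith("." + owned_domain)
    )
    total = len(hosts)
    return {
        "owned": owned,
        "third_party": total - owned,
        "owned_share": owned / total if total else 0.0,
        "by_domain": dict(counts),
    }


# Hypothetical citations gathered from one AI answer.
urls = [
    "https://www.example.com/about",
    "https://blog.example.com/launch-post",
    "https://en.wikipedia.org/wiki/Example",
    "https://www.g2.com/products/example",
]
summary = citation_share(urls, "example.com")
print(summary["owned"], summary["third_party"], summary["owned_share"])
```

A low owned share is not automatically bad, but it shows which third-party pages most need to carry accurate facts.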

Prioritize Gaps and Remediation

Categorize findings by platform, query type, and accuracy. Focus first on high-volume platforms, strategic vertical queries, and key differentiators where coverage is lacking or information is outdated. Strengthen retrievability by ensuring AI crawlers can access your content, implementing schema markup, and maintaining consistent brand descriptions across all digital properties.
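Crawler access can be checked against a site's robots.txt with Python's standard library. The sketch below parses a sample file rather than fetching a live one. The user-agent tokens listed are assumptions to verify against each vendor's current documentation, and the sample rules are invented for illustration.

```python
from urllib.robotparser import RobotFileParser

# Assumed crawler tokens; confirm the current names with each vendor.
AI_USER_AGENTS = ["GPTBot", "PerplexityBot", "ClaudeBot", "Google-Extended"]


def crawler_access(robots_txt: str, url: str, agents=AI_USER_AGENTS) -> dict[str, bool]:
    """Report, per user agent, whether robots.txt allows fetching the URL."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, url) for agent in agents}


# Invented robots.txt that blocks one AI crawler and allows everything else.
sample_robots = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /
"""

access = crawler_access(sample_robots, "https://www.example.com/about")
for agent, allowed in access.items():
    print(f"{agent}: {'allowed' if allowed else 'blocked'}")
```

With the sample file, only GPTBot is reported as blocked. For a live site, `RobotFileParser` also offers `set_url()` and `read()` to fetch the real file.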

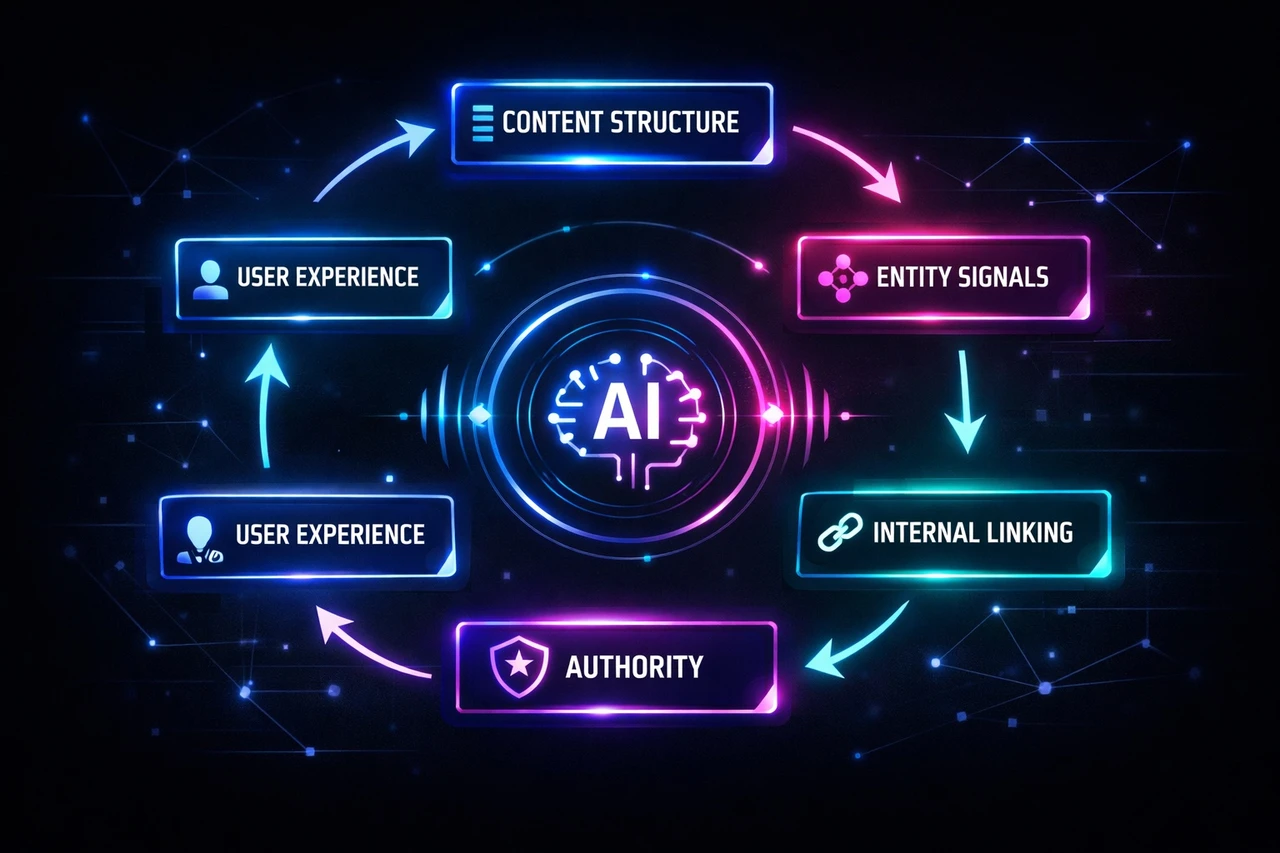

Fixing Structural Causes: Enhancing Your Digital Footprint

AI hallucinations about brands occur when generative AI systems infer information from fragmented or inconsistent signals across the web—missing structured data, weak entity linking, outdated Knowledge Graph entries, and conflicting third-party profiles. These structural gaps create data voids and data noise, forcing LLMs to fill in blanks with fabricated outputs.

Make Your Brand Facts Machine-Readable

Ensure core facts (name, address, phone, founding year, leadership, and product lines) are consistent across every digital property. Write a clear, factual “About” page that includes these essentials. Add basic schema markup (Organization, Person, Product schema) to describe your content to machines. Strengthen entity markup by including sameAs links in your organization schema, connecting your website to verified profiles on LinkedIn, Crunchbase, and Wikipedia. Create or update your Wikidata entry; structured databases like Wikidata are commonly used as reference sources by search engines and LLMs.
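As a rough illustration of Organization markup with sameAs links, the sketch below builds the JSON-LD from one set of facts and wraps it in a script tag for the page head. The company details and profile URLs are placeholders, and the helper names are hypothetical; the property names (`foundingDate`, `telephone`, `sameAs`) come from schema.org.

```python
import json
from typing import Optional


def organization_jsonld(name: str, url: str, founding_date: str,
                        same_as: list[str], telephone: Optional[str] = None) -> dict:
    """Build a schema.org Organization description as a plain dict."""
    data = {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "foundingDate": founding_date,
        "sameAs": same_as,   # profiles that refer to the same entity
    }
    if telephone:
        data["telephone"] = telephone
    return data


def as_script_tag(data: dict) -> str:
    """Wrap JSON-LD in the script tag used to embed it in an HTML page."""
    body = json.dumps(data, indent=2)
    return '<script type="application/ld+json">\n' + body + "\n</script>"


# Placeholder facts for an imaginary company.
org = organization_jsonld(
    name="Example Co",
    url="https://www.example.com",
    founding_date="2012",
    same_as=[
        "https://www.linkedin.com/company/example-co",
        "https://www.crunchbase.com/organization/example-co",
    ],
)
print(as_script_tag(org))
```

Generating the markup from a single record, rather than hand-editing it per page, is one way to keep the published facts from drifting apart.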

Build a Central Point of Truth

Publish a brand fact dataset as a JSON-LD file on your website—a single, authoritative source that AI systems can reference. Optimize schema quality with unique identifiers (@id, identifier, product SKUs) and nested relationships to clarify how entities connect. For enterprises, maintain a central brand data layer that ensures consistency across all teams and external platforms, preventing fragmentation before it reaches training datasets.
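The idea of unique identifiers and nested relationships can be sketched as a small JSON-LD graph in which each product points back to the organization by `@id`. Everything here is a placeholder: the domain, SKU, `@id` URL patterns, and helper names are assumptions for illustration, not a required layout.

```python
import json

BASE = "https://www.example.com"     # placeholder domain
ORG_ID = BASE + "/#organization"     # one stable identifier for the company


def product_node(sku: str, name: str) -> dict:
    """Describe a product and link it to the organization by @id."""
    return {
        "@type": "Product",
        "@id": f"{BASE}/products/{sku}#product",
        "sku": sku,
        "name": name,
        "manufacturer": {"@id": ORG_ID},   # a reference, not a second copy
    }


def brand_facts(products: list[dict]) -> dict:
    """Assemble the organization and its products into a single graph."""
    organization = {
        "@type": "Organization",
        "@id": ORG_ID,
        "name": "Example Co",
        "url": BASE,
    }
    return {"@context": "https://schema.org", "@graph": [organization, *products]}


def dangling_references(doc: dict) -> set[str]:
    """Return @id references that do not match any node in the graph."""
    defined = {node["@id"] for node in doc["@graph"]}
    referenced = set()
    for node in doc["@graph"]:
        for value in node.values():
            if isinstance(value, dict) and set(value) == {"@id"}:
                referenced.add(value["@id"])
    return referenced - defined


doc = brand_facts([product_node("EX-100", "Example Widget")])
print(json.dumps(doc, indent=2))
print("dangling references:", dangling_references(doc))
```

The `dangling_references` check is a cheap guard: if a product points at an identifier nobody defines, the relationship is lost to any system reading the file.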

Monitor and Correct Over Time

Establish a recurring AI brand accuracy audit to routinely fact-check how search engines and AI platforms describe your company. Use Google’s Knowledge Graph Search API to verify entity accuracy and update verified sources when discrepancies appear. Track AI platform outputs after major model updates to detect semantic drift, meaning gradual shifts in how a model describes your brand. Involve SEO, PR, and communications teams so brand data stays aligned across all channels.
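A Knowledge Graph check could look roughly like the sketch below, which builds a request URL for the Knowledge Graph Search API and summarizes a response. No network call is made: the API key is a placeholder and the sample response is hand-written to mirror the documented shape, so treat the helper names and sample data as assumptions.

```python
from urllib.parse import urlencode

KG_ENDPOINT = "https://kgsearch.googleapis.com/v1/entities:search"


def build_query_url(brand: str, api_key: str, limit: int = 3) -> str:
    """Build the search URL; fetch it with any HTTP client."""
    params = {"query": brand, "key": api_key, "limit": limit}
    return KG_ENDPOINT + "?" + urlencode(params)


def summarize_entities(response: dict) -> list[dict]:
    """Pull out the fields worth comparing against your own brand facts."""
    rows = []
    for item in response.get("itemListElement", []):
        result = item.get("result", {})
        rows.append({
            "name": result.get("name"),
            "description": result.get("description"),
            "detail": result.get("detailedDescription", {}).get("articleBody"),
            "score": item.get("resultScore"),
        })
    return rows


# Hand-written sample response, not real API output.
sample_response = {
    "itemListElement": [
        {
            "result": {
                "name": "Example Co",
                "description": "Software company",
                "detailedDescription": {"articleBody": "Example Co is a company founded in 2014."},
            },
            "resultScore": 120.5,
        }
    ]
}

print(build_query_url("Example Co", "YOUR_API_KEY"))
for row in summarize_entities(sample_response):
    print(row["name"], "|", row["description"], "|", row["detail"])
```

Comparing the `detail` text against your canonical facts (here, a founding year that may not match your own records) is the step that turns the lookup into an audit.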

Structural Limitations You Cannot Fix: Understanding Training Data Constraints

Certain hallucinations stem from limitations baked into how models are built and trained, and cannot be addressed through content strategy alone.

Knowledge Cutoff Dates

AI models operate within fixed temporal boundaries: each is trained on data up to a cutoff date. For example, Gemma 4 models are described as trained on data with a cutoff date of January 2025. If your company launched a rebrand, merged with another firm, or corrected a public misconception after a model’s cutoff, the model has no awareness of it from training alone. This isn’t a bug; it’s a structural constraint.

Data Filtering and Exclusions

Training datasets undergo rigorous preprocessing that removes sensitive data and personal information. While necessary for privacy and safety, this creates blind spots. If legitimate information about your brand was caught in these filters, the model may lack accurate reference material about your company.

Inherent Factual Limitations

Models generate responses based on statistical patterns learned from training data, not verified databases. They may produce incorrect or outdated factual statements because they rely on probability distributions rather than knowledge bases. Even well-represented brands can be mischaracterized if the training corpus contained conflicting signals or low-quality sources that outweighed authoritative ones.

These limitations are inherent to how models are built and trained. You cannot petition a model to “update” its training set. Instead, focus on correction mechanisms you can control: structured data, authoritative content distribution, and monitoring systems that flag inaccuracies as they surface in user-facing outputs.

When You Find an Error: Correction Strategy and Entity Reinforcement

When you discover AI systems generating incorrect information about your brand, speed and consistency determine whether the error becomes permanent or fades.

Publish and Reinforce the Correction

Create a canonical correction page on your owned properties (your website, blog, or newsroom) that clearly states the accurate information. Link to this page from relevant assets across your site. Submit feedback directly through each AI assistant’s built-in feedback channels (ChatGPT, Perplexity, and others provide in-product feedback options). Update third-party profiles such as LinkedIn, Crunchbase, Wikipedia, and industry directories with the correct facts, and push consistent information to high-authority knowledge bases that LLMs reference.

Build Narrative Density

AI systems look for patterns and consistency across multiple sources. A single correction won’t override widespread misinformation; you need narrative density. Define the correct version of your brand story in plain language, then ensure every piece of content, interview, press mention, and profile reinforces that core narrative. Inconsistent messaging dilutes your brand perception and gives AI mixed signals. Align your PR and SEO teams to avoid contradictory statements, and audit existing content to fix anything that doesn’t match your canonical version.

Strengthen Owned Content and Entity Clarity

Your website serves as the foundation AI uses to confirm what’s true. Vague or contradictory owned content creates doubt; clear, structured information builds authority. Implement Organization and Person schema sitewide, standardize your organization name, address, and social profiles, and populate sameAs fields with canonical profiles. Add provenance signals—inline citations, last-reviewed dates, reviewer markup—to demonstrate accuracy. The stronger your entity clarity and owned content, the faster AI systems will adopt and repeat the correct information about your brand.

When to Contact the AI Platform Directly

Most inaccuracies can be addressed through feedback mechanisms or source corrections, but certain situations require direct platform contact.

When Direct Contact Is Necessary

Reach out to the AI platform’s support or legal team when information violates terms of service, applicable laws, or causes significant brand harm that standard feedback channels haven’t resolved. If you’re dealing with defamatory content, privacy violations, or information protected under regulations like GDPR, contact the platform’s legal team with clear evidence.

For OpenAI products like ChatGPT, you can contact support through the chat bubble at help.openai.com, starting with a virtual assistant before escalating to human agents.

What to Prepare Before Contacting

Provide a clear description of the inaccuracy, your account details, specific examples of problematic outputs, and screenshots or recordings. Include request IDs if available and explain what correction you’re seeking. The more evidence you supply—particularly if you can show the information contradicts authoritative sources or your official materials—the stronger your case.

Remember that feedback mechanisms like thumbs-down buttons help AI systems refine performance, but their effectiveness depends on how many users report the same issue. If your feedback through standard channels hasn’t produced results after a reasonable time, or if the issue is severe enough to warrant legal consideration, escalation becomes appropriate.

Managing Expectations

Direct contact doesn’t guarantee immediate removal. AI training data cannot be directly edited once incorporated, so corrections typically influence future training cycles rather than current outputs. Static models may take months to reflect changes, while real-time systems can show improvements within days or weeks if you’ve corrected the source material. Document all requests and responses to track patterns, demonstrate good-faith efforts, and build a record if further action becomes necessary.

Building a Sustainable Monitoring Practice

AI hallucinations are not a one-time problem. Model updates, new platforms, and brand evolution require ongoing vigilance. A sustainable monitoring practice includes:

- Weekly alert reviews

- Monthly trend analysis

- Quarterly content updates to address inaccuracies

- Annual audit of queries and platform coverage

Prioritize monitoring based on business impact—direct brand name queries, pricing information, and competitor comparisons should receive the highest attention.

Proactive Brand Safety Systems

Proactive brand safety monitoring becomes more valuable as AI Overviews and similar features expand. Track key performance indicators including Mean Time To Detect (MTTD) and Mean Time To Resolve (MTTR) for harmful answers, sentiment accuracy in AI responses, share of authoritative citations, and visibility share across AI platforms. A rehearsed incident response playbook, for example one built around a 90-minute response window, can limit reputation damage when critical brand safety issues emerge; reductions of 70-80% are sometimes claimed, though results will vary by incident.
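The two timing metrics are simple averages once incidents are logged with timestamps. Below is a minimal sketch, assuming you record when a harmful answer first appeared (often only an estimate), when your team detected it, and when it was resolved; the `Incident` record and the sample dates are hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional


@dataclass
class Incident:
    first_seen: datetime                 # best estimate of when the answer appeared
    detected: datetime                   # when the team noticed it
    resolved: Optional[datetime] = None  # None while the incident is still open


def mean_delta(deltas: list[timedelta]) -> Optional[timedelta]:
    """Average a list of durations; None when there is nothing to average."""
    if not deltas:
        return None
    return sum(deltas, timedelta()) / len(deltas)


def mean_time_to_detect(incidents: list[Incident]) -> Optional[timedelta]:
    return mean_delta([i.detected - i.first_seen for i in incidents])


def mean_time_to_resolve(incidents: list[Incident]) -> Optional[timedelta]:
    # Open incidents are skipped so they do not distort the average.
    return mean_delta([i.resolved - i.detected for i in incidents if i.resolved])


# Hypothetical incident log.
incidents = [
    Incident(datetime(2025, 1, 1, 0, 0), datetime(2025, 1, 1, 6, 0), datetime(2025, 1, 2, 6, 0)),
    Incident(datetime(2025, 1, 10, 0, 0), datetime(2025, 1, 10, 2, 0)),
]

print("MTTD:", mean_time_to_detect(incidents))
print("MTTR:", mean_time_to_resolve(incidents))
```

Tracking both numbers over successive quarters shows whether monitoring is catching problems sooner and whether corrections are taking effect faster.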

Establish Your Single Source of Truth

Data hygiene is paramount. Establish your website as the single source of truth for all factual information—keep it current, accurate, and free of jargon. Implement structured data using schema.org markup to provide unambiguous facts to AI systems, reducing the likelihood of hallucinations. Content hardening strategies, including E-E-A-T signals and JSON-LD schema, make your content more authoritative and less prone to misinterpretation. Conduct web-wide data cleansing to identify and correct outdated information across all channels, including third-party review boards and comparison sites that AI systems frequently reference.

Regular monitoring of AI responses across ChatGPT, Perplexity, and Copilot allows you to identify and correct hallucinations at their source. Direct correction requests to platforms can influence future answers, though effectiveness varies. The shift from reactive damage control to proactive information management is no longer optional—it’s foundational to brand integrity in AI-driven search.

Scopic Studios delivers exceptional and engaging content rooted in our expertise across marketing and creative services. Our team of talented writers and digital experts excels in transforming intricate concepts into captivating narratives tailored for diverse industries. We’re passionate about crafting content that not only resonates but also drives value across all digital platforms.