Why Medical Content Ranks in Google but Disappears in AI Search

The Core Problem: Two Discovery Ecosystems, Two Visibility Requirements

Enterprise healthcare brands frequently encounter a counterintuitive problem: they dominate Google rankings for competitive keywords, yet remain completely invisible when patients ask ChatGPT, Perplexity, or Google AI Overviews the same question. This gap is not due to content quality, clinical authority, or SEO fundamentals. It exists because AI systems retrieve information using fundamentally different signals than traditional search engines.

Google’s algorithm rewards links, keyword optimization, and page structure. AI systems operate differently: they interpret structured relationships between entities (organization name, medical specializations, physician credentials, conditions treated), assess source credibility and institutional trust, and synthesize answers using machine-readable signals.

This creates a fundamental distinction: traditional search visibility and AI citation visibility are separate signals. A healthcare brand can have perfect E-E-A-T, clean technical SEO, and strong keyword rankings while remaining completely absent from AI-generated answers—the moment when patients form initial impressions and make early care decisions.

How Patients Now Begin Healthcare Discovery

Patients increasingly start healthcare research inside AI assistants before ever reaching Google or a provider website. They ask conversational questions about symptoms, treatments, and provider recommendations inside ChatGPT, Gemini, or Perplexity. When an AI system generates an answer, it decides—before the patient sees any results—which organizations are worth naming and recommending.

This sequential behavior matters operationally: if your healthcare brand is not named in an AI-generated response, it loses consideration entirely. You don’t lose a click; you lose the patient’s awareness during the high-influence stage of decision-making. AI search sits at the top of the funnel in patient discovery and influences everything the patient evaluates downstream. After receiving an AI answer, patients typically verify findings in Google Search and on provider websites, but only for organizations the AI already mentioned.

What AI Systems Actually Evaluate

The signals that trigger AI citation are specific and machine-readable: clear organizational profiles with named medical staff and visible credentials, treatment content authored by clinical providers, regularly updated clinical information with publication dates, and structured schema markup that defines medical facts for machine interpretation. Small specialty clinics with less brand recognition often appear more consistently in AI answers than large enterprise brands because their content explicitly defines these relationships through structured data.

AI systems reward entity clarity, knowledge organized for machine interpretation (not persuasion), comprehensive topical coverage, explicit physician and specialty associations, and high interpretive confidence. This is not preference—it’s infrastructure. Without these signals, even accurate, authoritative content gets filtered out or attributed to other sources.

YMYL Over-filtering: How AI Models Prioritize and Exclude Healthcare Content

The YMYL Classification and AI Response Rates

Healthcare content falls under Google’s Your Money or Your Life (YMYL) category, a classification that triggers stricter filtering mechanisms across all AI search systems. YMYL content in healthcare is subject to heightened algorithmic scrutiny because inaccurate information can directly harm user safety and financial outcomes. This creates asymmetric risk: AI systems filter YMYL content more aggressively than other topics, making visibility harder to achieve even for authoritative sources.

Different AI systems handle YMYL queries with varying levels of caution. Google AI Overviews show up for approximately 51% of YMYL queries, while ChatGPT maintains a 100% response rate and DeepSeek operates at 90%. The disparity suggests a deliberate strategy: Google generates AI summaries for YMYL content far less often than competing systems do. Its lower response rate reads less as a limitation than as a risk management decision, because the cost of being wrong on YMYL content is much higher.

When Google does generate an AI Overview for healthcare queries, it applies precision-focused filtering. For highly sensitive topics the system shows truncated summaries or gaps (marked as “An AI Overview is not available for this search”), which suggests intentional restrictions on generating AI summaries for certain content types. This over-filtering means healthcare brands can rank well in traditional search results yet remain invisible in AI-driven answers.

Why YMYL Filtering Creates the Invisibility Gap

Google’s filtering mechanism for healthcare content centers on E-E-A-T signals (Experience, Expertise, Authoritativeness, and Trustworthiness). For YMYL topics like healthcare, these signals carry far more weight than in other industries. When AI generates answers, trust becomes the deciding factor. Google and other AI systems lean heavily on sources they already trust, prioritizing established brands, recognized experts, and authoritative publishers to manage risk.

This creates the core visibility gap: ranking well in traditional search requires standard SEO practices and keyword optimization. Appearing in AI Overviews requires demonstrable, verifiable trust signals that go beyond content quality alone. In healthcare, visibility isn’t just earned through optimization—it’s validated by search engines assessing whether a source is trusted enough to cite to vulnerable users making health decisions.

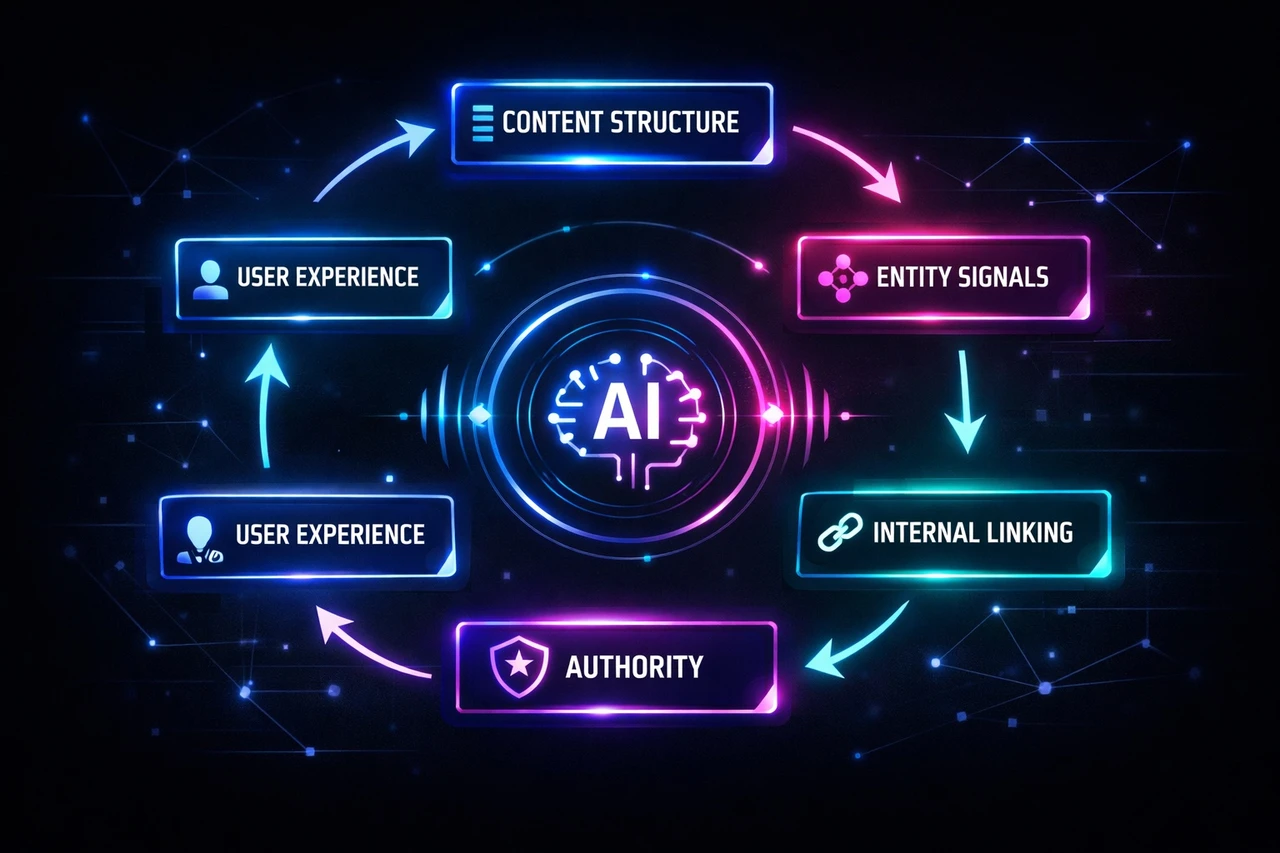

Framework: Building E-E-A-T Signals for Both Google and AI Search Engines

E-E-A-T as a Trust Threshold, Not Just a Ranking Factor

E-E-A-T has evolved from a ranking factor to a trust threshold in AI-driven search. AI systems evaluate credibility based on source reputation, author credentials, topical consistency, and citation patterns. In healthcare specifically, E-E-A-T works as a pass-fail requirement rather than a ranking signal: you are not optimizing for preference, you are meeting a baseline that determines whether your content gets considered at all.

Google AI Overviews appear on 63% of health searches. To be cited in them, content needs strong E-E-A-T signals: explicit expertise, strict YMYL compliance, institutional affiliation, clinical evidence, and regulatory compliance. AI systems disproportionately rely on sources demonstrating institutional trust, clinical expertise, and topical authority.

The Four Pillars: What AI Systems Actually Evaluate

Experience: Content that reflects practitioner insight, operational and clinical context, and familiarity with patient journeys is increasingly favored by AI systems. First-person clinical experience carries weight.

Expertise: Clear authorship, visible credentials, medical review disclosures, and editorial oversight are critical for AI systems. Detailed author bylines with board certifications and institutional affiliations signal depth.

Authoritativeness: Brands demonstrating sustained, focused coverage of healthcare topics send stronger authority signals. Backlinks from medical journals, hospitals, and health education platforms reinforce topical authority.

Trustworthiness: Display medical licenses, accreditations, hospital affiliations, editorial policies, and HTTPS security. Transparent authorship and review processes build trust with both AI systems and users.
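The authorship and review signals described in the pillars above can be expressed as page-level markup. The following is a minimal sketch, assuming a schema.org `MedicalWebPage` node with `author`, `reviewedBy`, and `lastReviewed`; the function name, people, dates, and URL are hypothetical placeholders, and nothing here guarantees citation by any AI system.

```python
import json
from datetime import date


def medical_web_page(headline, url, author_name, author_title,
                     reviewer_name, published, last_reviewed):
    """Build a schema.org MedicalWebPage node carrying authorship and review metadata."""
    return {
        "@context": "https://schema.org",
        "@type": "MedicalWebPage",
        "headline": headline,
        "url": url,
        # ISO 8601 dates make publication and review recency machine-readable
        "datePublished": published.isoformat(),
        "lastReviewed": last_reviewed.isoformat(),
        "author": {"@type": "Person", "name": author_name, "jobTitle": author_title},
        "reviewedBy": {"@type": "Person", "name": reviewer_name},
    }


# Hypothetical example values, for illustration only
page = medical_web_page(
    headline="Understanding Atrial Fibrillation",
    url="https://example-clinic.test/conditions/atrial-fibrillation",
    author_name="Dr. Alex Author",
    author_title="Cardiologist",
    reviewer_name="Dr. Sam Reviewer",
    published=date(2024, 6, 1),
    last_reviewed=date(2025, 1, 15),
)
print(json.dumps(page, indent=2))
```

Keeping the review date in markup, rather than only in visible text, is one way to make the "regularly updated" signal explicit.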

Structural and Technical Implementation

Structured content with explicit definitions, logical sectioning, descriptive headers, clear author bios, credentials, and citations helps AI systems extract and evaluate your expertise. Healthcare-specific schema markup is critical: use MedicalOrganization, Physician, MedicalCondition, MedicalProcedure, and FAQPage markup to make your E-E-A-T signals machine-readable.
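As one illustration of the markup types listed above, here is a small sketch that builds `FAQPage` JSON-LD and wraps it in the script tag a page would embed. The helper names `faq_page` and `to_script_tag` and the sample question are assumptions made for this example, not part of any library.

```python
import json


def faq_page(pairs):
    """Build a schema.org FAQPage node from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


def to_script_tag(node):
    """Serialize a JSON-LD node into an embeddable script element."""
    # Escape "</" so a string value cannot close the script element early;
    # "\/" is a valid JSON escape, so the payload still parses unchanged.
    payload = json.dumps(node, indent=2).replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{payload}\n</script>'


# Hypothetical content, for illustration only
faq = faq_page([
    ("Do I need a referral to see a cardiologist?",
     "Referral requirements depend on your insurance plan."),
])
tag = to_script_tag(faq)
print(tag)
```

The resulting tag is typically placed in the page head or body alongside the visible FAQ content it describes.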

To demonstrate E-E-A-T effectively: use real-world examples, reinforce expertise with credentials and citations, build authority through topical depth and internal linking, and earn trust with transparency and accuracy. Content that aligns with clinical consensus and is supported by reliable external sources—peer-reviewed research, government health agencies—performs better across AI platforms.

Healthcare content fails AI evaluation due to lack of entity authority, missing clinical evidence, and absence of a regulatory compliance framework. The gap isn’t complexity; it’s completeness.

Structured Data Requirements for AI Citation: MedicalCondition, Physician, and MedicalOrganization Schema

The Role of Structured Data as a Digital Passport

Structured data functions as a digital passport: it lets AI systems verify medical facts without interpreting prose. Rather than parsing narrative content, AI engines read structured markup to confirm credentials, qualifications, and organizational details. When you implement proper schema, you are not just optimizing for visibility; you are giving machines a way to trust and cite your content directly.

For medical practices, this means the difference between appearing in AI overviews and remaining invisible. AI systems need machine-readable confirmation of who you are, what you treat, and where you operate. Without it, even accurate content gets filtered out or attributed elsewhere.

Essential Schema Types for Medical Authority

Three schema types form the foundation of AI-readable medical content:

MedicalOrganization Schema tells AI systems your practice type, location, and operational details. Include accepted insurance, service areas, and organizational credentials. This schema answers the baseline question: “Is this a legitimate medical entity?”
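A minimal sketch of what such an organization node might look like, assuming the schema.org properties `medicalSpecialty`, `isAcceptingNewPatients`, `healthPlanNetworkId`, and `areaServed`. Every value below (clinic name, domain, network ID, address, phone) is a hypothetical placeholder.

```python
import json

# Hypothetical clinic details, for illustration only
organization = {
    "@context": "https://schema.org",
    "@type": "MedicalOrganization",
    # A stable @id lets other nodes (physicians, pages) point back to this entity
    "@id": "https://example-clinic.test/#organization",
    "name": "Example Heart Clinic",
    "url": "https://example-clinic.test/",
    "medicalSpecialty": "https://schema.org/Cardiovascular",
    "isAcceptingNewPatients": True,
    "healthPlanNetworkId": "EXAMPLE-NETWORK-001",
    "areaServed": "Springfield metropolitan area",
    "telephone": "+1-555-0100",
    "address": {
        "@type": "PostalAddress",
        "streetAddress": "100 Example Street",
        "addressLocality": "Springfield",
        "addressRegion": "IL",
        "postalCode": "62701",
        "addressCountry": "US",
    },
}

rendered = json.dumps(organization, indent=2)
print(rendered)
```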

Physician Schema exposes individual provider credentials, such as specialty and hospital affiliation, in a form machines can check. This can increase the likelihood of inclusion in AI answers. Without it, provider expertise remains unverified in the machine’s assessment.
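A hedged sketch of a provider node follows. Note that schema.org defines `Physician` as a kind of medical business (a doctor's office), so some teams model individual credentials on a separate `Person` node; which approach fits is a judgment call. The helper `physician_node`, the names, and the all-zero NPI are hypothetical.

```python
import json


def physician_node(name, npi, specialty_url, hospital_name, organization_id):
    """Build a schema.org Physician node linked to its parent organization."""
    return {
        "@context": "https://schema.org",
        "@type": "Physician",
        "name": name,
        "usNPI": npi,  # US National Provider Identifier; placeholder value below
        "medicalSpecialty": specialty_url,
        "hospitalAffiliation": {"@type": "Hospital", "name": hospital_name},
        # Reference the organization by @id instead of repeating its details
        "parentOrganization": {"@id": organization_id},
    }


# Hypothetical provider, for illustration only
physician = physician_node(
    name="Dr. Alex Author",
    npi="0000000000",
    specialty_url="https://schema.org/Cardiovascular",
    hospital_name="Example General Hospital",
    organization_id="https://example-clinic.test/#organization",
)
print(json.dumps(physician, indent=2))
```

Linking by `@id` is what makes the physician-to-organization association explicit rather than implied by page layout.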

MedicalCondition Schema contextualizes the health topics your content addresses. Combined with MedicalProcedure schema, it helps AI systems understand and properly attribute your content. This allows AI engines to match your expertise to relevant user queries with confidence.
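To make the pairing of condition and procedure concrete, here is an illustrative sketch that places a `MedicalCondition` and a `MedicalProcedure` in one `@graph`. The choice of atrial fibrillation and catheter ablation is only an example, and the selection of properties is an assumption about what is useful, not a required set.

```python
import json

# Illustrative condition node; the properties chosen are a subset of what schema.org offers
condition = {
    "@type": "MedicalCondition",
    "name": "Atrial fibrillation",
    "alternateName": "AFib",
    "code": {"@type": "MedicalCode", "codeValue": "I48", "codingSystem": "ICD-10"},
    "relevantSpecialty": "https://schema.org/Cardiovascular",
    "signOrSymptom": [{"@type": "MedicalSymptom", "name": "Heart palpitations"}],
    "possibleTreatment": {"@type": "MedicalTherapy", "name": "Catheter ablation"},
}

# Companion procedure node describing the treatment named above
procedure = {
    "@type": "MedicalProcedure",
    "name": "Catheter ablation",
    "procedureType": "https://schema.org/PercutaneousProcedure",
    "bodyLocation": "Heart",
}

# One @graph keeps related nodes in a single JSON-LD document
graph = {"@context": "https://schema.org", "@graph": [condition, procedure]}
print(json.dumps(graph, indent=2))
```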

Implementation as a Verification Layer

Structured data isn’t decorative markup—it’s a verification layer. When implemented correctly, it allows LLMs to confirm your qualifications and outcomes independently. This reduces the friction between your content and AI citation, making your practice discoverable where traditional SEO alone falls short.
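One way to check that the verification layer is actually present is to extract JSON-LD from a rendered page and look for gaps. The sketch below uses only the Python standard library. The `REQUIRED` checklist is a hypothetical house rule, not a schema.org or search engine requirement, and nodes nested under `@graph` are not handled.

```python
import json
from html.parser import HTMLParser

# Hypothetical internal checklist; adjust to your own markup policy
REQUIRED = {
    "MedicalOrganization": ["name", "url", "address"],
    "Physician": ["name", "medicalSpecialty"],
    "MedicalCondition": ["name"],
}


class JsonLdExtractor(HTMLParser):
    """Collect JSON-LD nodes from script tags of type application/ld+json."""

    def __init__(self):
        super().__init__()
        self._in_jsonld = False
        self._buffer = []
        self.nodes = []

    def handle_starttag(self, tag, attrs):
        if tag == "script" and dict(attrs).get("type") == "application/ld+json":
            self._in_jsonld = True
            self._buffer = []

    def handle_data(self, data):
        if self._in_jsonld:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag == "script" and self._in_jsonld:
            self._in_jsonld = False
            try:
                parsed = json.loads("".join(self._buffer))
            except json.JSONDecodeError:
                return  # skip malformed blocks rather than failing the audit
            self.nodes.extend(parsed if isinstance(parsed, list) else [parsed])


def missing_properties(html):
    """Map each recognized @type to the checklist properties it lacks."""
    extractor = JsonLdExtractor()
    extractor.feed(html)
    report = {}
    for node in extractor.nodes:
        node_type = node.get("@type") if isinstance(node, dict) else None
        if isinstance(node_type, str) and node_type in REQUIRED:
            report[node_type] = [p for p in REQUIRED[node_type] if p not in node]
    return report


sample_html = """
<html><head>
<script type="application/ld+json">
{"@context": "https://schema.org", "@type": "MedicalOrganization",
 "name": "Example Heart Clinic", "url": "https://example-clinic.test/"}
</script>
</head><body></body></html>
"""
print(missing_properties(sample_html))
```

A check like this only confirms that markup is present and complete against your own checklist; it does not confirm that the facts in the markup are true.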

Practitioner vs. Brand Content: Optimizing for AI Responses

How AI Systems Evaluate Brand Authority

Large language models don’t simply rank content. They interpret, prioritize, and summarize information to form a view of your brand. AI sentiment reflects tone, framing, confidence, and context. LLMs build this view from authoritative third-party coverage, owned content, brand consistency, comparative context, and even from gaps where expected signals are missing.

The distinction between traditional SEO and AI sentiment is critical for healthcare marketers. Traditional SEO answers whether users can find your brand. AI sentiment answers whether users should trust it. A medical brand can rank #1 in Google search results but still be framed negatively or weakly in AI-generated responses. In high-stakes industries like healthcare, this gap directly impacts patient decision-making and trust.

Building Practitioner-Led Authority for AI Systems

To influence how AI systems represent your brand, healthcare organizations need a deliberate strategy centered on practitioner-led content. LLMs form their view of a brand through:

- Authority-weighted content: Publish insights from practitioners—clinicians, researchers, and subject-matter experts—rather than generic brand messaging. AI systems prioritize expert-authored content over corporate communications.

- Third-party validation: Strengthen coverage in trusted medical publications, peer-reviewed journals, and industry sources. External validation carries more weight in AI sentiment than self-published claims.

- Brand entity clarity: Ensure your organization, practitioners, and specialties are clearly defined across owned and external channels. Ambiguity weakens AI interpretation.

- Comparative narrative control: When AI systems compare your brand to competitors, the framing matters. Shape how your differentiation appears in these comparisons.

AI sentiment often changes before rankings or traffic shift, making it a leading indicator of brand authority. For healthcare marketers, monitoring and optimizing this signal is as important as tracking search visibility—and increasingly, more predictive of patient trust and engagement.
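A simple starting point for monitoring is the share of AI answers that name your brand at all. The sketch below assumes you have already collected response texts for a fixed set of patient-style prompts by some other means; it calls no AI service, and the function name `mention_rate` and the sample responses are hypothetical. It measures presence only, not tone or framing.

```python
import re


def mention_rate(responses, brand_names):
    """Return the fraction of responses that mention any of the brand names."""
    if not responses:
        return 0.0
    # Word-boundary, case-insensitive match for each name variant
    patterns = [
        re.compile(r"\b" + re.escape(name) + r"\b", re.IGNORECASE)
        for name in brand_names
    ]
    hits = sum(
        1 for text in responses if any(p.search(text) for p in patterns)
    )
    return hits / len(responses)


# Hypothetical collected answers, for illustration only
sample_responses = [
    "Options in the area include Example Heart Clinic and two hospital systems.",
    "You may want to ask your primary care doctor for a referral.",
    "Patients often mention example heart clinic for arrhythmia care.",
    "Several regional hospitals offer cardiology services.",
]
rate = mention_rate(sample_responses, ["Example Heart Clinic"])
print(f"Brand named in {rate:.0%} of sampled answers")
```

Tracking this number over time for the same prompt set gives a rough trend line to compare against rankings and traffic.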

Conclusion: Navigating Healthcare’s New Discovery Landscape

Medical content now operates in two distinct discovery ecosystems. Traditional Google Search and AI-powered search tools like Perplexity, ChatGPT, and Google AI Overviews use fundamentally different signals, citation patterns, and content evaluation methods. A piece of content can rank prominently in Google’s organic results while remaining invisible in AI overviews—or vice versa.

The visibility gap exists because the two systems prioritize fundamentally different signals:

- Traditional search rewards topical authority, E-E-A-T signals, technical SEO fundamentals, keyword alignment, and link authority.

- AI search prioritizes entity clarity, structured schema markup, institutional trust signals, physician-specialty associations, clinical evidence, and interpretive confidence.

These are not competing priorities within a single system—they are separate retrieval mechanisms with distinct evaluation criteria. Optimizing for one does not automatically optimize for the other.

Healthcare brands that optimize for only one channel miss critical patient discovery pathways. Those focused solely on traditional SEO miss the growing segment of patients who begin their research in AI tools. Conversely, content optimized only for AI may lack the keyword structure Google rewards.

The brands establishing durable patient acquisition pipelines aren’t choosing between channels. They’re building content systems that perform across both—understanding which signals matter for each discovery mechanism, and ensuring their medical content, expertise, and organizational profile are clearly defined for both human and machine interpretation. This requires parallel optimization frameworks: one for keyword ranking, one for AI citation accuracy and trustworthiness.

The patient discovery landscape has fragmented. Organizations that recognize this shift and adapt their content strategy accordingly will outperform competitors relying on a single approach as search behavior continues to evolve.

Scopic Studios delivers exceptional and engaging content rooted in our expertise across marketing and creative services. Our team of talented writers and digital experts excels at transforming intricate concepts into captivating narratives tailored for diverse industries. We’re passionate about crafting content that not only resonates but also drives value across all digital platforms.